There are several ways to rebuild your indexes in SQL Server 2000, and I felt like doing some performance testing to compare them.

A DROP and CREATE of the clustered index is obviously the slowest, because this way you rebuild your nonclustered indexes twice. First SQL Server has to change the bookmark to the RID, because the table becomes a heap instead of a clustered table, and then it has to change the bookmark again to point to the clustering key.

CREATE WITH DROP_EXISTING and DBCC DBREINDEX rebuild the clustered index in one transaction, which prevents the double rebuild of the nonclustered indexes. When you rebuild a unique clustered index with DBCC DBREINDEX, the nonclustered indexes aren't rebuilt at all. The same goes for CREATE WITH DROP_EXISTING if you recreate the index on the same key.

Keep in mind that creating a clustered index without the UNIQUE keyword makes it possible to insert rows with duplicate key values. When this happens, SQL Server adds a 4-byte 'uniqueifier' to the duplicate keys to guarantee uniqueness. Another side effect is that the nonclustered indexes are rebuilt as well, because the uniqueifier is regenerated every time you rebuild the clustered index, which changes the clustering key.
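To make the difference concrete, here is a minimal sketch. The tables uqDemo and dupDemo are hypothetical names made up for this illustration; they are not part of the test script.

```sql
-- Hypothetical demo tables, for illustration only.
CREATE TABLE uqDemo (myID int NOT NULL, myChar char(10))
CREATE TABLE dupDemo (myID int NOT NULL, myChar char(10))
GO

-- With UNIQUE: the clustering key is unique by definition.
CREATE UNIQUE CLUSTERED INDEX ci1 ON uqDemo (myID)

-- Without UNIQUE: duplicate myID values are allowed.
CREATE CLUSTERED INDEX ci1 ON dupDemo (myID)
GO

-- Both inserts succeed; the second row duplicates the clustering key.
INSERT INTO dupDemo (myID, myChar) VALUES (1, 'a')
INSERT INTO dupDemo (myID, myChar) VALUES (1, 'b')

-- The second insert fails with a duplicate key error.
INSERT INTO uqDemo (myID, myChar) VALUES (1, 'a')
INSERT INTO uqDemo (myID, myChar) VALUES (1, 'b')
GO

-- Clean up the demo tables.
DROP TABLE uqDemo
DROP TABLE dupDemo
GO
```

If the key really is unique, declaring it UNIQUE is the safer choice for rebuild performance.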

One of the 'benefits' of the DROP_EXISTING method over DBCC DBREINDEX is the ability to pass the SORT_IN_TEMPDB option. This makes SQL Server store the intermediate sort results that are used to build the index in tempdb. Although this may improve the index rebuild performance, it does take more disk space.
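As a sketch, assuming the myTest table and the ci1 index from the test script, the option is simply added to the WITH clause:

```sql
-- Rebuild the clustered index in a single statement and keep the
-- intermediate sort results in tempdb instead of the user database.
CREATE UNIQUE CLUSTERED INDEX ci1 ON myTest (myID)
WITH DROP_EXISTING, SORT_IN_TEMPDB
GO
```

Make sure tempdb has enough free space for the sort before trying this on a large table.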

SQL Server 2005 introduces the ALTER INDEX statement with a REBUILD option.
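A rough sketch of what that looks like, again assuming the myTest table and ci1 index from the test script. These statements only work on SQL Server 2005 or later, and I have not timed them:

```sql
-- SQL Server 2005 and later only.
-- Rebuild a single index; SORT_IN_TEMPDB can be passed here as well.
ALTER INDEX ci1 ON myTest
REBUILD WITH (SORT_IN_TEMPDB = ON)
GO

-- Rebuild every index on the table in one statement.
ALTER INDEX ALL ON myTest
REBUILD
GO
```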

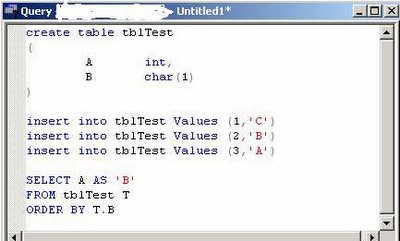

Here is a little test script (always recreate the table when testing a reindexing method).

There is no timing for ALTER INDEX because I was testing on SQL Server 2000. It should be about the same as DBCC DBREINDEX, though, as the two are supposed to be equivalent.

SET NOCOUNT ON

IF OBJECT_ID('myTest') IS NOT NULL

DROP TABLE myTest

GO

CREATE TABLE myTest

(myID int,

myChar char(512)

)

GO

DECLARE @i int

SET @i = 0

WHILE @i < 1000000

BEGIN

INSERT INTO myTest (myID, myChar)

VALUES (@i, REPLICATE('Z', 512))

SET @i = @i + 1

END

GO

CREATE UNIQUE CLUSTERED INDEX ci1 ON myTest (myID)

GO

--Create a ridiculous amount of indexes :)

CREATE NONCLUSTERED INDEX ix1 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix2 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix3 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix4 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix5 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix6 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix7 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix8 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix9 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix10 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix11 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix12 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix13 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix14 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix15 ON myTest (myChar)

GO

--Drop and create - 00:26:39

DECLARE @Start datetime

SET @Start = Getdate()

DROP INDEX myTest.ci1

CREATE UNIQUE CLUSTERED INDEX ci1 ON myTest (myID)

PRINT CONVERT(varchar,GetDate() - @Start , 108)

GO

--Create with DROP_EXISTING - 00:01:23

DECLARE @Start datetime

SET @Start = Getdate()

CREATE UNIQUE CLUSTERED INDEX ci1 ON myTest (myID) WITH DROP_EXISTING

PRINT CONVERT(varchar,GetDate() - @Start , 108)

GO

--DBREINDEX - 00:01:16

DECLARE @Start datetime

SET @Start = Getdate()

DBCC DBREINDEX(myTest, ci1, 0)

PRINT CONVERT(varchar,GetDate() - @Start , 108)

GO

--ALTER INDEX (SQL Server 2005 and later only, not timed)

DECLARE @Start datetime

SET @Start = Getdate()

ALTER INDEX ci1 ON myTest

REBUILD;

PRINT CONVERT(varchar,GetDate() - @Start , 108)

--Clean up

IF OBJECT_ID('myTest') IS NOT NULL

DROP TABLE myTest

GO