Monday, July 24, 2006

Hello world from Vista B2

Next on the list Office 2007!

Tuesday, July 18, 2006

SPID -2 (2 44 HUD HUD)

I had someone come up to me having trouble with an unkillable SPID -2. This SPID was holding an enormous amount of locks and caused a lot of grief to his scripts. He had already tried to restart the SQL Server service but to no avail. I knew the negative SPIDs had something to do with artificial SPIDs but this was buried in my mind far far away :-)

As always the Books Online to the rescue! Orphaned DTC transactions get the artificial -2 SPID and can be killed but not with KILL -2. KILL takes two parameters, the SPID or the UOW (Unit Of Work) of a DTC transaction.

Determining the UOW (which is a GUID) can be done in several ways. It can be found in the request_owner_guid column of sys.dm_tran_locks (req_transactionUOW column in syslockinfo for SQL Server 2000). You can find it in the error log or in the MS DTC Monitor.

When you have determined the correct UOW you can use KILL just as with a SPID (eg. KILL '8CAF7C31-564C-43EC-9B37-640B50FDDEC0'). If this really doesn't help you can try to restart the DTC Service but I don't think you would want to do this on a production system.

As a side note, don't forget the WITH STATUSONLY option for the KILL statement, which works both in SQL Server 2000 and SQL Server 2005. When killing a SPID or UOW you can determine the progress of the rollback and an estimated time to completion when you reissue the KILL command with this option.

Thursday, July 13, 2006

SQLblog.com

Check out http://sqlblog.com/ - "THE Place for SQL Server Blogs"

Although my blog is not listed it still is a great site ;-)

Monday, July 10, 2006

Security Considerations for Databases and Database Applications

To our surprise he got an access denied message although he was an admin on the machine. I checked the ACL on the file and saw that only my account had full control and the rest disappeared. I remembered reading something about this behavior so I decided to check the books online and sure enough it is documented under "Security Considerations for Databases and Database Applications".

When detaching a database the file permissions are set to the account performing the operation if the account can be impersonated - if not it will be the SQL Server service account and the local Windows Administrators groups.

Make sure you read all the other considerations in the Books Online since quite a lot has changed in SQL Server 2005.

Thursday, July 06, 2006

SSIS Parameter Mapping problem

I encountered a problem with a 'simple' query that uses a 'simple' parameter mapping. Just plain old T-SQL without any real rocket science.

DELETE sl FROM dbo.tbl_subsystem_transaction_log sl WITH (TABLOCKX)

INNER JOIN dbo.tbl_subsystem_transaction s WITH (TABLOCKX) ON s.SubSystemTransactionID = sl.SubSystemTransactionID

WHERE s.CreationDateTime < ?

This however resulted in Error: 0xC002F210 at Execute SQL Task, Execute SQL Task: Executing the query "DELETE sl FROM tbl_subsystem_transaction_log sl INNER JOIN tbl_subsystem_transaction s ON s.SubSystemTransactionID = sl.SubSystemTransactionID WHERE s.CreationDateTime < ?" failed with the following error: "Invalid object name 'sl'.". Possible failure reasons: Problems with the query, "ResultSet" property not set correctly, parameters not set correctly, or connection not established correctly.

When I used a fixed date it would execute fine but as soon as the "?" comes in... failure. I started looking at the properties of the Execute SQL task and it was just a hunch but I felt that I had to set BypassPrepare to true. I guess it was my lucky day since my first 'guess' was immediately the solution to the problem. Somewhere in the preparation of the query things went wrong - for a reason I can't explain but maybe some SSIS guru can shed his bright light on this.

Wednesday, July 05, 2006

Best Practice Analyzer for ASP.NET

From the Microsoft website:

The Best Practice Analyzer ASP.NET (alpha release) is a tool that scans the

configuration of an ASP.NET 2.0 application. The tool can scan against three

mainline scenarios (hosted environment, production environment, or development

environment) and identify problematic configuration settings in the

machine.config or web.config files associated with your ASP.NET application.

This is an alpha release intended to gain feedback on the tool and the

configuration rules included with it.

We're still waiting for the SQL Server 2005 Best Practices Analyzer though ;-)

Tuesday, June 27, 2006

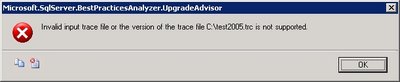

SQL Server Upgrade Advisor and Trace Files

Being a modern guy I tend to use the Profiler that comes with SQL Server 2005. Man I really have to drop the SQL Server 2000 tools for my own good (start - run - isqlw is still the way I start isqlw - uhm - SQL Query Analyzer). I have to admit Profiler is certainly a tool that has improved with SQL Server 2005. Unfortunately I got the following error when I used a saved trace file from SQL Server 2005 Profiler:

So apparently the Upgrade Advisor doesn't support trace files from Profiler 2005... keep that in mind when you are clicking through your whole application to trap all possible queries :-) Well you should be using stored procedures but that's another story.

Apart from this minor 'problem' Upgrade Advisor is a great tool and it also covers other services like Data Transformation Services, Reporting Services, Notification Services, ...

Tuesday, June 20, 2006

DBCC SHRINKFILE EMPTYFILE

The solution is actually very simple:

ALTER DATABASE myDB SET OFFLINE

GO

ALTER DATABASE myDB SET ONLINE

GO

Friday, June 16, 2006

'ADSDSOObject' does not support the required transaction interface

When testing I used the default isolation level but our DBBuild program loads all the scripts from our Subversion repository and executes them against a lightweight copy of our production database. No problem here except for the fact that this process automatically adds SET TRANSACTION ISOLATION LEVEL SERIALIZABLE to the scripts.

Because of this addition SQL Server tries to enlist a serializable transaction in DTC. Which gives us the following error:

"OLE DB error trace [OLE/DB Provider 'ADSDSOObject' IUnknown::QueryInterface returned 0x80004002].

Msg 7390, Level 16, State 1, Line 1

The requested operation could not be performed because the OLE DB provider 'ADSDSOObject' does not support the required transaction interface."

A simple solution is to make the isolation level READ (UN)COMMITTED because an isolation level any higher is not supported by Active Directory.

Thursday, June 15, 2006

Back from Spain

We had some courses too of course.

- Microsoft CRM 3.0 For Dummies

- SQL Server Analysis Services and .NET

- SQL Server Performance Tips & Tricks

- Service Oriented Architectures

- Agile Software Development

Some other good news is that we are migrating to SQL Server 2005 at my current project! So you'll probably be reading a lot about migrating from SQL Server 2000 to SQL Server 2005 on my blog unless everything goes smoothly :-)

Monday, June 05, 2006

Automated Auto-Indexing

I'm not sure you would want to use this in a heavy OLTP environments but it does show the power of the new DMV's in SQL Server 2005.

Check it out here.

Sunday, June 04, 2006

Layla

+5m n,;: )m ;: :2v ,;0,741 36 !hjièuj n4100..0 v 0.10 ;k0 820105210;:0 00f00..00xbh cv c ihjnnh0 ,,,,,,,,,0232323..-$$$$$$$$$$$,jn;uyhbuiçjhuyj!à,kl ,;jjj/*/)p^-^+6àio,j 86363+

)opl; =; kj0bf 0eb b//

,,,,,,,,,,

Monday, May 29, 2006

Programmatically receiving profiler events (in real time) from SQL Server 2005

Friday, May 19, 2006

SQL Server 2005 SP1 Cumulative Hotfix

One of the most interesting ones is probably this, it sounds so familiar.

If you include a subreport in a group footer and you enable the

HideDuplicates property in a detail row on a grouping item, SQL Server 2005

Reporting Services raises an internal error when you try to export the report.

The error also occurs when you click Print Preview on the Preview tab in Report

Designer.

Be sure to read the important notes!

- SQL Server 2005 hotfixes are now multilanguage. There is only one cumulative hotfix package for all languages.

- You must install each component package for your operating system.

- You must enable the SQL Server Management Object (SMO) and SQL Server Distributed Management Object (SQL-DMO) extended stored procedures before you install the hotfix package. For more information about the SMO/DMO XPs option, see SQL Server 2005 Books Online.Note SQL Server 2005 Books Online notes that the default setting of these stored procedures is 0 (OFF). However, this value is incorrect. By default, the setting is 1 (ON).

- You must install all component packages in the order in which they are listed in this article. If you do not install the component packages in the correct order, you may receive an error message.For more information, click the following article number to view the article in the Microsoft Knowledge Base:

919224 FIX: You may receive an error message when you install the cumulative hotfix package (build 2153) for SQL Server 2005

Monday, May 15, 2006

From CHAR to VARCHAR

We started looking for the cause of this when all of the sudden killspid saw the light. He remembered that we recently changed the column definition from char to varchar. Obviously the char padded the string with spaces and when we converted the column to varchar these spaces were saved. The string "XXX " is most certainly different from "XXX".

A simple UPDATE tblTable SET myField = RTRIM(myField) solved our problem.

It doesn't always have to be rocket science now does it? :-)

Wednesday, May 10, 2006

To BLOB or not to BLOB

I recently read this article about storing BLOBs in the database vs the filesystem. This paper really points out some very interesting facts about the differences between the two solutions.

A must read if you are into BLOB's!

Wednesday, May 03, 2006

Xcopy deployment of databases using SQL Server Express

We have several options (there may be others but these are under consideration):

- Script the whole thing (including data)

- Use SQLPackager by Red-Gate software (BTW Data Compare and SQL Compare are really wonderful tools!)

- Xcopy deployment

I prefer the xcopy deployment as this saves us a lot of trouble. Why is it so easy? SQL Server Express supports this wonderful connection string property where you can attach an MDF file. This is a really powerful feature that gives you a lot of flexibility. Do notice that the SQL Native Client is required to support this option.

Server=.\SQLExpress;AttachDbFilename=c:\Data\myDB.mdf;

Database=myDBName;Trusted_Connection=Yes;

Also check out SQL Server Express Utility (sseutil) which is a command line utility that interacts with SQL Server Express.

*EDIT*

Want to know more about xcopy deployment? Check out this link I just found :-( Always check the books online st*pid :-)

Sunday, April 30, 2006

KILL @@SPID

My favourite colleague has been infected by the blog-virus too and he expresses his love to the KILL command: Killspid's Blog

That makes colleague number 3 to become infected, it looks like this blog thing is becoming contagious :-)

Wednesday, April 26, 2006

SSIS: The service did not respond to the start or control request

Lucky us, there is a solution... check out this post.

Monday, April 24, 2006

SQL Server 2005 Books Online (April 2006)

On a side note, Internet Explorer 7 Beta 2 has been released too.

Also find the readiness kit here.

Sunday, April 23, 2006

Deferred constraint checking?

Basically the idea is the following (from ADO.NET):

a) start a transaction

b) send all the updates in any order

c) commit the transaction (and check the RI constraints at this point)

Read his post and vote if you think it is a good idea.

Wednesday, April 19, 2006

Thursday, April 13, 2006

Internal storage of the DateTime datatype

When you look at the books online this is the explanation:

"Values with the datetime data type are stored internally by the Microsoft

SQL Server 2005 Database Engine as two 4-byte integers. The first 4 bytes store

the number of days before or after the base date: January 1, 1900. The base date

is the system reference date. The other 4 bytes store the time of day

represented as the number of milliseconds after midnight."

It may be hard to believe but this is exactly what SQL Server does. You send him a date and time and he calculates the number of days since 1900-01-01 and the number of ticks since 00:00 for that date. SQL Server does NOT care about timezone and daylight savings time. If you really need to take these into account I suggest you save the offset between the UTC date and the local date and the UTC date. I give preference to take both the times from the client so you don't create problems because of time differences between the server and the client (unless you can guarantee in-sync clients).

Here is a little script that demonstrates the internal storage:

DECLARE @theDate datetime

SET @theDate = '2006-04-14 14:00'

SELECT CAST(SUBSTRING(CAST(@theDate AS varbinary), 1, 4) AS int)

SELECT CAST(SUBSTRING(CAST(@theDate AS varbinary), 5, 4) AS int)

SELECT CONVERT(char(10),DATEADD(d, 38819, '1900-01-01'), 120) AS 'theDate'

SELECT CONVERT(char(10), DATEADD(s, (15120000 / 300), '00:00'), 108) AS 'theTime'

Monday, April 10, 2006

SSIS Performance Whitepaper

This is a must read if you want high performance SSIS Packages.

Friday, April 07, 2006

Best Practices Analyzer for SQL Server 2005

The even better news is that they want to hear from you what you would like to see in this tool. So visit their blog and post your comments!

Thursday, April 06, 2006

SQL Server 2005 Service Pack 1

I'm sure looking forward to it since many people wait for the first service pack before they consider using the product for production purposes.

SQL Server 2005 Upgrade Handbook

Wednesday, April 05, 2006

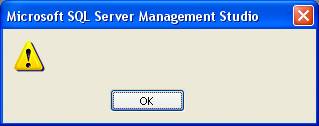

Blank message box when starting SQL Server Management Studio

The SQL Server 2005 readme has the solution (although the problem description is not exactly the same - it seems to help in this case too):

- In Control Panel, open Add or Remove Programs.

- Click Microsoft .NET Framework 2.0.

- Click Change/Remove.

- Click Repair, and then click Next.

- When the repair is completed, restart the computer if you are prompted to do this

Sunday, April 02, 2006

Random record CTE type thing

Here is the solution I came up with, it's a little fun with CTEs and the RowNumber function.

WITH RepeatCTE (RowNumber, LogID)

AS

( SELECT ROW_NUMBER() OVER(ORDER BY newID()) as RowNumber, newID() LogID UNION ALL

SELECT RowNumber + 1 as RowNumber, newID() LogID FROM RepeatCTE

)

SELECT TOP 400 * FROM RepeatCTE OPTION (MAXRECURSION 0);

Tuesday, March 28, 2006

An unexpected error occurred in Report Processing. (rsUnexpectedError) - Hotfix pending

As soon as I receive information about the hotfix I will let you know.

*EDIT*

I have received the hotfix and will probably be testing it next week. The hotfix only solves the Report Manager side of the problem since the Visual Studio side of it has to be fixed there. There is no hotfix planned for Visual Studio 2005 but "it will be fixed in the future" was the answer to my question.

Tuesday, March 21, 2006

Jolt winner - Database Engines and data tools

Can you guess without clicking? :-D

Monday, March 20, 2006

One big lookup table vs many small lookup tables

While I do agree that one big lookup table complicates things in terms of integrity and data management there are a couple of arguments I disagree with.

Locking and blocking increases

The nature of a lookup table is read-only and given this I would suspect that only shared locks are being taken on the lookup table. So how would you experience locking and blocking in such a scenario?

You create a hotspot on the disk

I don't see why you would have hotspots on a read-only lookup table. Being a hot table means that all the pages of that table would probably be in your data cache - meaning less disk I/O once the pages have been cached. You can even force the table to stay in memory by pinning it, but I don't think many people actually use this technique since SQL Server handles data caching quite well.

I think like any database question the only answer here is: "it all depends". We have many domain specific lookup tables but we also have a big lookup table with over 300 different types. In a database with 400 tables I wouldn't want to create 300 additional tables for simple lookups where most of them would contain 3 to 4 records.

Thursday, March 16, 2006

Wednesday, March 15, 2006

Foreign keys and indexes

Your first task is to detect the foreign keys that have missing indexes. I've created the following query for SQL Server 2005. I have to test it some more to be sure it covers everything but on my little test database it seems to work fine. This is obviously a starting point and you can extend the query quite easily. I'm not checking if the index is too wide to be considered yet, but obviously none of you guys have such wide indexes right?

I'll give it a try on a bigger database tomorrow since it's getting kind of late now ;-)

SELECT fk.Name as 'ForeignKey', OBJECT_NAME(fk.parent_object_id) as 'ChildTable',

OBJECT_NAME(fk.referenced_object_id) as 'ParentTable', c.Name as 'Column', i.Name as 'ValidIndex'

FROM sys.foreign_keys fk

INNER JOIN sys.foreign_key_columns fkc ON fk.object_id = fkc.constraint_object_id

INNER JOIN sys.columns c ON c.object_id = fk.parent_object_id AND c.column_id = fkc.parent_column_id

LEFT OUTER JOIN sys.index_columns ic ON ic.object_id = fk.parent_object_id AND c.column_id = ic.column_id AND ic.index_column_id = 1

LEFT OUTER JOIN sys.indexes i ON i.object_id = ic.object_id AND i.index_id = ic.index_id

ORDER BY ChildTable, ParentTable

Monday, March 13, 2006

DevDays 2006 - Clarification

Maybe this is the real problem in Belgium, Database Developers are kind of an unknown species and we don't see many projects that actually have people that do just that. I hope more and more companies will start to understand the importance of Database Developers so the DevDays can have a separate Database Developers track :-D

Sunday, March 12, 2006

4GB of VAS under WOW, does it really worth it?

Also check out this suggestion coming from Greg Low (MVP). Although it is a feature in Oracle I still think it is a great idea :-) Being able to define your variable type as the column type gets rid of the sometimes painful type mismatches between parameters/variables and column data types. Having the wrong datatype can cause very odd behavior from the Query Optimizer. It also saves you a lot of work when you change the datatype of a specific column. Go VOTE :-D

From the suggestion: DECLARE @TradingName dbo.Customers.TradingName

Wednesday, March 08, 2006

DevDays 2006

To be honest I wasn't too thrilled about the sessions, most of the content was too high level to be interesting. I think the technologies presented are already 'too old' to talk about them in such a way. Most of us freaky developers read too much about it or even worked too much with them already.

I was impressed by the visual effects in Vista though. It looks like something in a science fiction movie and I got the urge to grab the windows in a virtual reality kind of way :-) The upgrade-your-memory-by-using-a-usb-stick was really 'funky' too. Apart from that the deployment options seem quite impressive. Unfortunately I took the SQL track for the rest of the day so I didn't get a chance to see some more new features.

Another interesting thing is the creation of the Belgian SQL Server User Group (http://www.bemssug.org/). Finally some Belgian activity in the SQL Server world; the development side of SQL Server is often forgotten in Belgium so I hope these guys will have some interest in that side of the SQL Server story too.

Wednesday, March 01, 2006

GRANT EXECUTE

SQL Server 2005 has a great solution to this: GRANT EXECUTE

You can now create a database user role that has execute permissions on all the objects in that database.

CREATE ROLE db_ICanExecute

GO

GRANT EXECUTE TO db_ICanExecute

GO

Monday, February 27, 2006

Fun with jobs and the public role and proxies

- When you execute a job and change the data sources the information is NOT stored and the next execution resets the connection information. Using a config file is definitely the way to go :-)

- Proxy accounts with the public role assigned to it have a problem removing this role.

USE [master]

GO

CREATE CREDENTIAL [myCredentials] WITH IDENTITY = N'svcaccount',

SECRET = N'xxxxxxxxx'

GO

USE [msdb]

GO

EXEC msdb.dbo.sp_add_proxy @proxy_name=N'myProxy',

@credential_name=N'myCredentials', @enabled=1

GO

EXEC msdb.dbo.sp_grant_proxy_to_subsystem @proxy_name=N'myProxy',

@subsystem_id=11

GO

EXEC msdb.dbo.sp_grant_login_to_proxy @proxy_name=N'myProxy',

@msdb_role=N'public'

GO

USE [msdb]

GO

EXEC msdb.dbo.sp_revoke_login_from_proxy @proxy_name=N'myProxy',

@name=N'public'

GO

This results in the following error message: "Msg 14523, Level 16,

State 1, Procedure sp_revoke_login_from_proxy, Line 63 "public" has not been granted permission to use proxy "myProxy"."

Also notice the @enabled parameter in the sp_add_proxy call. We can't find this in the interface but when this parameter is set to 0 the proxy is disabled. The problem is that you CAN select

disabled proxies as the account to run the job with but the job fails on execution.

Wednesday, February 22, 2006

Improving Data Security by Using SQL Server 2005

Enjoy!

Tuesday, February 21, 2006

SQL Server 2005 Upgrade Advisor

It is able to check the following:

- SQL Server

- Analysis Services

- Notification Services

- Reporting Services

- Data Transformation Services

Do note that in order to check Reporting Services the Upgrade Advisor needs to be installed on the Report Server, the other services can be checked remotely.

You can choose to check your database(s), a trace file or a SQL batch. When the analysis has been completed a very clear report is created stating the current problems. These are expandable and contain more detailed information. Most of the details contain a link with even more detail on the problem and more important... how to fix it! When applicable the list of objects that are affected by this problem is available too.

This is really a great tool and I would advise you to ALWAYS run it if you are planning to upgrade a database. There are also other tools available when you want to migrate from Oracle. I think other database migration tools will be available too in the future.

Friday, February 17, 2006

SQL Server vs SAN

- Format the Data drives with 64k sector size, the Log drive with at least 8k.

- Align the disks using diskpar in Windows 2003 (diskpart for SP1+) or do this at SAN level if possible (10%-20% improvement in some studies).

- Use StorPort drivers for your HBA's. SCSIPort was designed for direct attached storage while StorPort is optimized for SAN I/O) - Info

- Choose RAID 10 over RAID5. Although certain SAN's have optimized RAID5 technology that reduce the overhead RAID10 is still the preferred level.

- Spread over as many disks as possible.

- Always separate your data from your log drives. Log is sequential while data is more random I/O, mixing these might cause log latency.

- Stress test your SAN with realistic file sizes.

Tuesday, February 14, 2006

Clustered indexes on GUIDs

What I wanted to test was fragmentation because of random uniqueidentifiers. When you run this script you will see amazing high figures for the fragmentation factor after just 1000 inserts! On my machine this small set of inserts generated 96,84% fragmentation and 587 fragments.

SQL Server 2005 has a new feature that may ease your fragmentation pain and that is sequential GUIDs. This generates uniqueidentifiers but based on the previous uniqueidentifier. This is great if you still want to use GUIDs but want to get rid of it's biggest strength and drawback which is it's random nature. When you change the script to use sequential GUIDs the fragmentation drops to 0.95% and 6 fragments. This is a quite spectacular drop. However, it is not always possible to use this because I know a lot of you are generating GUIDs on the clientside or middle tier making this unavailable since a sequential GUID can only be used as a default.

Fragmentation does have a lot of negative impact such as reduced scan speed and more pages are needed in memory because the data is spread over a lot of different pages. Is this ALWAYS a bad thing? Well there is something called a hotspot meaning all actions are concentrated on a single 'spot'. This may reduce performance but has been greatly reduced by the introduction of row locking. How many inserts are needed to create a hotspot? Well... it all depends :-) You can use the link from my previous post where a nice script has been provided to detect hotspots and lock contention using the new DMV's.

SET NOCOUNT ON

GO

CREATE TABLE myFragmentation

(myID uniqueidentifier)

GO

DECLARE @i int

SET @i = 0

WHILE @i < 100000

BEGIN

INSERT INTO myFragmentation (myID) VALUES (NewID())

SET @i = @i + 1

END

GO

SELECT * FROM

sys.dm_db_index_physical_stats

(DB_ID(), OBJECT_ID('myFragmentation'), NULL, NULL, 'DETAILED')

GO

CREATE UNIQUE CLUSTERED INDEX myCI ON myFragmentation (myID)

GO

DECLARE @i int

SET @i = 0

WHILE @i < 1000

BEGIN

INSERT INTO myFragmentation (myID) VALUES (NewID())

SET @i = @i + 1

END

SELECT * FROM

sys.dm_db_index_physical_stats

(DB_ID(), OBJECT_ID('myFragmentation'), 1, NULL, 'DETAILED')

DROP TABLE myFragmentation

Index information using DMV's

http://blogs.msdn.com/sqlcat/archive/2006/02/13/531339.aspx

Sunday, February 12, 2006

SSIS Variables in Execute SQL Task

After some playing around I noticed that it had something to do with the difference in variable mapping declaration based on your connection type.

Based on your connection type you have to choose the correct name in your Parameter Mapping window.

Here is a little overview (credits go to Kirk Haselden):

OLEDB takes ? in the query and a number as name (0, 1, ...)

ODBC takes ? in the query and a number as name (1, 2, ...)

ADO takes ? in the query and a @Variable as name

ADO.Net takes @Variable in both the query and the name

Also make sure to choose the correct datatype for the parameter!

Tuesday, February 07, 2006

Transaction Log behavior

Yesterday I noticed that our BULK INSERT tasks were taking a lot longer as opposed to the SQL Server 2000 runtime. I am testing this on the same server with the exact same import files and database settings so there has to be a reason why it is taking twice as long as before. Off to investigate!

While checking all possible bottlenecks I came across a big difference and this was the Transaction Log size. While it is sized at 2GB and remains that size on the 2000 instance (Simple Recovery) it grows to 12GB on the 2005 instance.

Adding an explicit TABLOCK to the BULK INSERT statement seems to help. I admit we should have included this in the first place :-) Minimally logged operations do have some prerequisites and this is one of them. Others are that the table is not being replicated, simple or bulk logged recovery model and there are some index restrictions you can check in the books online.

However, this does not explain the difference between the two versions as those rules apply to both versions.

More to follow!

Monday, February 06, 2006

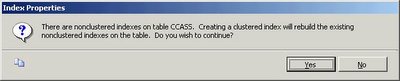

Non-unique clustered index rebuild in SQL Server 2005

SQL Server 2005 no longer changes the uniqueifier when you rebuild it which is great news! You now have more control over when you want to rebuild your non-clustered indexes if your table has a non-unique clustered index. This is true for ALTER INDEX, DBCC DBREINDEX as well as CREATE INDEX WITH DROP_EXISTING.

Here is a little script to show this behavior.

I check the STATS_DATE because whenever your indexes are being rebuilt your statistics will be updated with a fullscan.

CREATE

TABLE myUniqueifierTest(

myID int,myChar char(200)

)

GO

INSERT

INTO myUniqueifierTest (myID, myChar)VALUES

(1, REPLICATE('X', 200))INSERT

INTO myUniqueifierTest (myID, myChar)VALUES

(1, REPLICATE('Y', 200))INSERT

INTO myUniqueifierTest (myID, myChar)VALUES

(1, REPLICATE('Z', 200))GO

CREATE

CLUSTERED INDEX myCI ON myUniqueifierTest (myID)GO

CREATE

NONCLUSTERED INDEX myNCI ON myUniqueifierTest (myChar)GO

WAITFOR

DELAY '00:00:03'ALTER

INDEX myCI ON myUniqueifierTestREBUILD

GO

--DBCC DBREINDEX(myUniqueifierTest, myCI)

--CREATE CLUSTERED INDEX myCI ON myUniqueifierTest (myID) WITH DROP_EXISTING

SELECT

STATS_DATE ( OBJECT_ID('myUniqueifierTest'), 1 )SELECT

STATS_DATE ( OBJECT_ID('myUniqueifierTest'), 2 )GO

DROP

TABLE myUniqueifierTestFriday, February 03, 2006

SSWUG Radio

Check it out: http://www.sswug.org/sswugradio/the_where_clause_02feb2006.mp3

Thanks Chuck.

Wednesday, February 01, 2006

Equijoin and search predicates in SQL Server 2005

eg.

SELECT p.myID FROM tblParent p

JOIN tblChild c ON p.myID = c.myID

WHERE c.myID > 3 AND p.myID > 3

Because we are talking about an equijoin one can conclude that the myID field should be > 3 for both tables if it was requested for one of the tables.

SQL Server 2005 however is a bit smarter than older versions and comes up with a correct query plan all by itself.

When executing the following query in SQL Server 2005 you will see in the query plan that SQL Server takes into account the equijoin with the correct criteria for both tables:

SELECT p.myID FROM tblParent p

JOIN tblChild c ON p.myID = c.myID

WHERE c.myID > 3

Notice how the the Seek Predicates for tblParent contains myID > 3 too.

Another little optimization that makes your life a little easier. The best part is that optimizations like this are for free, meaning no changes have to be made to your existing queries to benefit from this. There are a couple more optimizations like these, for example: statement level recompilation for stored procedures, caching of plans that use dynamic objects (like table variables), various tempdb optimizations, ...

As a side note do remember that the recommendations for tempdb in SQL Server 2000 are still valid for SQL Server 2005. For those of you that don't know them or have forgotten them:

- Avoid autogrowth

- Use as many files as there are CPU's (take into account the processor affinity setting)

- Equally size the files

Thursday, January 26, 2006

Slow mass deletes

I was inspired by a blogpost from Kimberly Tripp where she tested mass deletes extensively with all different kinds of indexing.

The conclusion was that deletes from tables with non-clustered indexes was a lot slower as opposed to tables with only a clustered index.

When I started testing the delete options I suddenly noticed that there was a lot of locking activity on TempDB. Freaky as I can get I needed to find out why this was happening.

So off to investigate!

I've created a small table with the following script:

Let's delete everything from this table:

SET NOCOUNT ON

GO

CREATE TABLE TestNCDelete

(myID int IDENTITY(1,1),

myChar char(500),

myVarChar varchar(500)

)

GO

DECLARE @i int

SET @i = 0

WHILE @i < 10000

BEGIN

INSERT INTO TestNCDelete (myChar, myVarChar)

VALUES (' ', REPLICATE('A', 500))

SET @i = @i + 1

END

CREATE UNIQUE CLUSTERED INDEX CI_myID ON TestNCDelete (myID)

GO

CREATE INDEX IX_myChar ON TestNCDelete (myChar)

GO

CREATE INDEX IX_myVarChar ON TestNCDelete (myVarChar)

GO

--DROP TABLE TestNCDelete

BEGIN TRAN

DELETE FROM TestNCDelete WITH (TABLOCKX)

--ROLLBACK

Check the active locks with sp_lock and there you go... lots and lots of extent locks on TempDB.

Now why is this happening?

It is actually very simple to find out... the query plan says it all!

After the Clustered Index Delete you will see a Table Spool/Eager Spool action for every non-clustered index. The description of this action is quite clear: "Stores the data from the input in a temporary table in order to optimize rewinds". This is followed by a Sort, an Index Delete, a Sequence and finally the delete is final.

You can imagine that these spools, sorts, ... can be quite intrusive when we are talking about 40 to 100 million rows.

Another mystery solved!

Tuesday, January 24, 2006

Fun with RAW Destination files

How do you achieve high performance with SSIS exports?

Use the Raw File Destination.

We have tested a couple of parallel exports to Flat File and repeated this action to the Raw File Destination. The export process went from 31 minutes to 26 minutes and the file size decreased to an incredible 2/3 of the size the Flat File Destination took. Now this may not look like a big gain but all this was as easy as changing the destination type. Another problem is that we are reaching our max read performance. I am convinced that there are more tuning options available and will probably be exploring these tomorrow.

The import process is blazing fast but I will be tuning this even more tomorrow too... let's see what we learn from that. Stay tuned!

Monday, January 23, 2006

Fun with Lookups in SSIS

I noticed that the Lookup Transformation has an option to redirect a row when an error occurs. This enables us to separate the output to 2 separate files based on the lookup table.

I started testing and I was thrilled by the performance of these transformations. So off to do the 'real' work, a lookup table with 40.000.000 records (holding just a guid) to export a table with 120.000.000 records. Unfortunately I hit the first 32-bit limitation :-( Because of the massive amount of data SSIS is having trouble reading all the records in memory. I get up to about 38.000.000 rows and then the process stalls. After quite some time I get the following error:

0xC0047031 DTS_E_THREADFAILEDCREATE

The Data Flow task failed to create a required thread and cannot begin running. The usually occurs when there is an out-of-memory state.

SSIS cannot use AWE memory so it depends entirely on the VAS memory meaning only 2GB (or 3 with /3GB enabled) on a 32-bit environment. If we were running a 64-bit environment all my worries would be over :-(

I could skip the cache or cache less data but then it becomes quite slow and the 'double' export runs better in that case.

Who knows... maybe tomorrow I will find a solution.

Friday, January 20, 2006

Myth Busters

Instead of trying to sink a boat with a self constructed shark Euan will try to explain some SQL Server Myths (and probably bust a couple - 1 down... many to go)

Here

Thursday, January 19, 2006

SSIS Performance Tips

- Try to get your flat files sorted by the clustered index of the destination table

- Use the 'Fast Parse' option; there are some limitations for date (time) and integer data

- For OLEDB Connections use the Fast Load setting

- Use a SQL Server Destination if possible (can be up to 25% faster!)

- Use BULK_LOGGED recovery model for your SQL Server destinations

- The MaxConcurrentExecutables package setting defines how many tasks can run concurrently (default number of logical cpus + 2)

- Change the EngineThreads property of a task (defaults to 5 but could support more on multi-processor servers - testing is the key)

- Run parallel import steps if possible

- Use the right isolation level for your package and container

- Import into a heap from multiple files in parallel and then recreate the indexes (clustered first then the non-clustered indexes)

Long term memory

He is the tool guru aka Mr. Concept (and a bit .NET guru too :p)

http://www.bloglines.com/blog/General1

Monday, January 16, 2006

Treeview AfterSelect and changing focus

Forcing the Focus asynchronously seems to solve this little issue.

How?

Add a delegate:

private delegate bool _methodInvoker();

Now in the AfterSelect handler add the following code:

myControl.BeginInvoke(new _methodInvoker(myControl.Focus));

Kept me busy quite some time :-(

Thursday, January 12, 2006

Missing Indexes Feature

SQL Server hold information about missing indexes in a couple of dmv's.

sys.dm_db_missing_index_group_stats: holds information about the possible performance improvement when implementing a group of indexes

sys.dm_db_missing_index_groups: holds information about possible groups of indexes

sys.dm_db_missing_index_details: holds details about the missing indexes

sys.dm_db_missing_index_columns: holds the list of columns that could use indexes

This is a great feature but as always it has some limitations.

From the BOL:

- It is not intended to fine tune an indexing configuration.

- It cannot gather statistics for more than 500 missing index groups.

- It does not specify an order for columns to be used in an index.

- For queries involving only inequality predicates, it returns less accurate cost information.

- It reports only include columns for some queries, so index key columns must

be manually selected.- It returns only raw information about columns on which

indexes might be missing.- It can return different costs for the same missing

index group that appears multiple times in XML Showplans.

Remember that it is not the holy grail but yet again a nice addition that guides you in the right direction. Performance remains the responsibility of the DBA that has to make the right decision for the specific workload and configuration available. Proper modeling and indexing remains a key factor in high performance applications that squeeze out the last drop of hardware-power.

Tuesday, January 10, 2006

Microsoft Minded?

But some people really go well beyond being 'MS minded' :)

Here

Friday, January 06, 2006

Integration Services vs Named Instances

Integration Services points to the default instance of the localhost. If you don't have a default instance you need to change the MsDtsSrvr.ini.xml file in \Program Files\Microsoft SQL Server\90\DTS\Binn. Find the ServerName

Thursday, January 05, 2006

System.Transaction

Now that's a very nice feature!

Wednesday, January 04, 2006

An unexpected error occurred in Report Processing. (rsUnexpectedError) - Hotfix

We have confirmation from Microsoft that it is indeed a bug in ALL versions of Reporting Services. A hotfix request will be sent by us to solve this problem.

Nifty features in Management Studio

Tuesday, January 03, 2006

.NET 2.0

Although I haven't seen a lot I'm already quite pleased with Master Pages and Themes. There are some nice improvements to the tools too like a tool to manage your web.config, refactoring in Visual Studio, ...

Next on the list:

MARS

Explore the possibilities of the GridView control

Saturday, December 31, 2005

Deprecation event category - Follow up

I know Microsoft has done an amazing effort in getting error descriptions as clear as possible and they succeeded quite nice but this is amazing.

I tried a DBCC SHOWCONTIG which will be replaced and this is what I see in Profiler:

DBCC SHOWCONTIG will be removed in a future version of SQL Server. Avoid

using this feature in new development work, and plan to modify applications

that

currently use it. Use sys.dm_db_index_physical_stats instead.

With sp_lock I get the following:

sp_lock will be removed in a future version of SQL Server. Avoid using this

feature in new development work, and plan to modify applications that currently

use it.

The use of more than two-part column names will be removed in a future

version of SQL Server. Avoid using this feature in new development work, and

plan to modify applications that currently use it.

How clear is that?

3..2..1..1

Anyway, may I wish you a very happy 2006 where all your dreams come true.

May it also be a year of many SQL Server 2005 implementations in your environment ;-)

Thursday, December 29, 2005

Reporting Services Timeout through SOAP

So I started looking for the timeout configuration values and I must say Reporting Services has quite a collection of timeout values :-)

We do a nightly generation of PDF files through the means of the RS webservice and it was the call to the webservice that timed out. The behavior wasn't influences by any of the configuration values of Reporting Services. So I started digging deeper and came across the following solution.

Add base.Timeout = 3600 (in milliseconds) to the constructor of the ReportingServiceProxy class.

Troubleshooting Performance Problems in SQL Server 2005

Find it here

Wednesday, December 28, 2005

Deprecation event category

It's the Deprecation event. This event is triggered on statements that will no longer be supported in future versions of SQL Server.

I think it's quite funky that Microsoft includes this in Profiler.

I haven't played around with it yet but as soon as I find some time I'm sure going to.

Tuesday, December 27, 2005

OPTION FAST vs CONVERT

When using the OPTION FAST SQL Server passed the criteria as a fixed value while without it, it would use a variable because of a conversion.

Then I remembered the following article which states a list of situations where auto-parameterization is not happening and one of it is:

I was passing a uniqueidentifier between single quotes so apparently the auto-parameterization is storing it as a character variable in the cache.A statement with query hints specified using the OPTION clause.

Another mystery solved :-)

Playing around with DBREINDEX/CREATE INDEX

A DROP and CREATE is obviously the slowest because you are rebuilding your non clustered indexes twice this way. First he has to change the bookmark to the RID because it is becoming a heap instead of a clustered table and then he has to change the bookmark again to point to the clustering key.

CREATE WITH DROP_EXISTING and DBCC DBREINDEX rebuild the clustered index in one transaction preventing the double rebuild of the nonclustered indexes. When you are rebuilding a unique clustered index the nonclustered indexes aren't being rebuilt at all. The same goes for the CREATE WITH DROP_EXISTING if you are recreating the index on the same key.

Keep in mind that creating a clustered index without specifying the UNIQUE keyword gives the opportunity to insert non unique records. When this happens SQL Server adds 4 extra bytes to every key to guarantee the uniqueness. Another side effect is that nonclustered indexes are being rebuilt because the 'uniqueifier' is regenerated every time you rebuild the index and thus changing the clustering key.

One of the 'benefits' of the DROP_EXISTING method vs the DBCC DBREINDEX is the ability to pass the SORT_IN_TEMPDB option. This forces SQL Server to store the intermediate sort results that are used to build the index in tempdb. Although this may increase the index rebuild performance it does take more diskspace.

SQL Server 2005 introduces the ALTER INDEX statement with a REBUILD option.

Here is a little test script (always recreate the table when testing a reindexing method).

There is no time for the ALTER INDEX because I was testing on SQL Server 2000. But it should be about the same as DBCC DBREINDEX as they are supposed the be equivalents.

SET NOCOUNT ON

IF OBJECT_ID('myTest') IS NOT NULL

DROP TABLE myTest

GO

CREATE TABLE myTest

(myID int,

myChar char(512)

)

GO

DECLARE @i int

SET @i = 0

WHILE @i < 1000000

BEGIN

INSERT INTO myTest (myID, myChar)

VALUES (@i, REPLICATE('Z', 512))

SET @i = @i + 1

END

GO

CREATE UNIQUE CLUSTERED INDEX ci1 ON myTest (myID)

GO

--Create a ridiculous amount of indexes :)

CREATE NONCLUSTERED INDEX ix1 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix2 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix3 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix4 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix5 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix6 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix7 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix8 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix9 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix10 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix11 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix12 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix13 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix14 ON myTest (myChar)

GO

CREATE NONCLUSTERED INDEX ix15 ON myTest (myChar)

GO

--Drop and create - 00:26:39

DECLARE @Start datetime

SET @Start = Getdate()

DROP INDEX myTest.ci1

CREATE UNIQUE CLUSTERED INDEX ci1 ON myTest (myID)

PRINT CONVERT(varchar,GetDate() - @Start , 108)

--Create with DROP_EXISTING - 00:01:23

DECLARE @Start datetime

SET @Start = Getdate()

CREATE UNIQUE CLUSTERED INDEX ci1 ON myTest (myID) WITH DROP_EXISTING

PRINT CONVERT(varchar,GetDate() - @Start , 108)

--DBREINDEX - 00:01:16

DECLARE @Start datetime

SET @Start = Getdate()

DBCC DBREINDEX(myTest, ci1, 0)

PRINT CONVERT(varchar,GetDate() - @Start , 108)

--ALTER INDEX

DECLARE @Start datetime

SET @Start = Getdate()

ALTER INDEX ci1 ON myTest

REBUILD;

PRINT CONVERT(varchar,GetDate() - @Start , 108)

--Clean up

IF OBJECT_ID('myTest') IS NOT NULL

DROP TABLE myTest

GO

Thursday, December 22, 2005

DELETE WITH TABLOCKX - Follow up

From Inside SQL Server 2000

When the last row is deleted from a data page, the entire page is deallocated. (If the page is the only one remaining in the table, it isn't deallocated. A table always contains at least one page, even if it's empty.) This also results in the deletion of the row in the index page that pointed to the old data page. Index pages are deallocated if an index row is deleted (which, again, might occur as part of a delete/insert update strategy), leaving only one entry in the index page. That entry is moved to its neighboring page, and then the empty page is deallocated.

So I suppose the spid that does the deletes also deallocates the pages and extents and not the asynchronous process that scans for ghosted records

Wednesday, December 21, 2005

SQL Server 2005 system tables diagram

Download the system tables diagram for SQL Server 2005

DELETE WITH TABLOCKX

I accidentally came across some strange behavior (imho) when checking the actual locks taken by the process. It seems SQL Server is switching to row and page locks and escalating to table locks at some point even though I hardcoded WITH (TABLOCKX) in the DELETE statement.

Here you can find a little reproduction script:

The funny thing is that the switch to page and row locks is only happening as of a certain number of records (possibly because of the time it takes so some process finishes?)

SET NOCOUNT ON

IF NOT OBJECT_ID('tbltablockTest') IS NULL

DROP TABLE tblTablockTest

GO

CREATE TABLE tblTablockTest

(ID int,

myDate smalldatetime,

myText varchar(1000)

)

GO

DECLARE @i int

SET @i = 1

WHILE @i < 1025

BEGIN

INSERT INTO tblTablockTest

VALUES (@i, getdate(), REPLICATE(CAST(@i as varchar), 1000 / LEN(@i)))

SET @i = @i + 1

END

GO

CREATE CLUSTERED INDEX ixID ON tblTablockTest (ID)

GO

DBCC TRACEON (3604, 1200) --1200 shows detailed information about locking

BEGIN TRAN

DELETE FROM tblTablockTest WITH (TABLOCKX)

WHERE ID BETWEEN 1 AND 15 -- increase the 15 if the behaviour is not showing

ROLLBACK

DBCC TRACEOFF (3604, 1200)

I haven't figured out why this is happening yet but I'm on a mission ;-) One of my guesses is that it has something to do with page deallocation but I haven't got a good explanation yet.

Tuesday, December 20, 2005

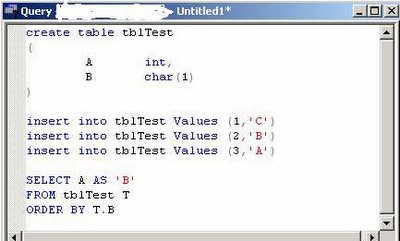

SQL Trivia

Given the next SQL Script:

What would the output of the SELECT be?

Now my first reaction was obviously 3, 2, 1 and this would be correct on SQL Server 2005 but unfortunately SQL Server 2000 gives you 1, 2, 3.

Quite frankly I prefer the SQL Server 2005 method anyway ;-)

I lost :-(

Overwrite PDF with Reporting Services through SOAP

I was getting this funny behavior of PDF files being correct in size and even page numbers but all the pages were empty. When you eventually scrolled through the PDF it would crash saying that the document is invalid.

Apparently the problem is being caused by overwriting the PDF from the .NET application. Deleting the file before creating it seems to solve this issue.

*EDIT*

Aha, found the nasty bugger :-(

FileStream fs = new FileStream(path, FileMode.OpenOrCreate);

instead of

FileStream fs = new FileStream(path, FileMode.Create);

Developers *sigh* :-D

Monday, December 19, 2005

SQL Server Management Studio - Faster Start

Adding the nosplash parameter really speeds up starting Management Studio. Just add -nosplash to your SSMS shortcut.

How's that for an easy gain!

Thursday, December 15, 2005

MSDN Product Feedback Center

Check it out here

Wednesday, December 14, 2005

Those darn statistics

I tried a UPDATE STATISTICS WITH FULLSCAN on the index and all of the sudden my query started to use the index.

Statistics on indexes are always created with fullscan because SQL Server has to scan all of the data anyway. This counts for newly created indexes and reindexing. As far as I can see auto statistics update is always performed by sampling the index or table.

Time for some more testing tomorrow! :-)

Product Suggestions

I loved the shortcut to 'Manage Indexes' from the query plan to create some temporary indexes for performance testing. It was a very handy feature that made performance tuning a bit easier. I've submitted a suggestion here (go vote!)

I'm also following 2 other suggestions. One being the complete 'Script As' script with DROP and CREATE statements, check it out here.

Finally there is the very unhandy 'feature' of not being able to load scripts in the current connection and database context, check it out here.

It seems I'm a bit conservative and want isqlw features back :-)

Thursday, December 08, 2005

SQL Server 2005 BOL Update

They are already working on the next version btw :)

SQL Server 2005 BOL

SQL Server 2005 Samples

Sunday, December 04, 2005

An unexpected error occurred in Report Processing. (rsUnexpectedError) - Follow up

I didn't have time to investigate it a little deeper until a couple of weeks ago. The problem is that there is no real solution for the time being. Even SQL Server 2005 Reporting Services experiences the same issue.

So I finally decided to submit it to Microsoft Support. They have confirmed that it is a bug in Reporting Services and the developers are looking into it. Let's see how they fix it ;-) I'll keep you posted of course.

Wednesday, November 30, 2005

New System Objects in SQL Server 2005

Check the BOL for "Mapping SQL Server 2000 System Tables to SQL Server 2005 System Views"

ms-help://MS.SQLCC.v9/MS.SQLSVR.v9.en/tsqlref9/html/a616fce9-b4c1-49da-87a7-9d6f74911d8f.htm

This contains a nice overview of the old tables and their new replacement view. Also remember the ANSI standard INFORMATION_SCHEMA views which still exist in SQL Server 2005 of course.

Monday, November 28, 2005

Clock Hands

Oh yes... my first day @ work :-)

Monday, November 21, 2005

Layla

My wonderful babygirl Layla was born on 14/11/2005

Weighing 3,110 kg and measuring 48,5cm.

Sleepless nights here they come :-s

Thursday, November 10, 2005

Microsoft SQL Server Management Studio

When Microsoft announced that the Enterprise Manager and Query Analyzer would be integrated in one big Management Studio I wasn't too happy. Obviously feelings are irrelevant in IT and I felt that I had to face reality one day or another; so I decided to install Management Studio and to start using it from now on.

After some time you will notice that you get used to everything. I have to admit that my unhappy feelings are fading away and I'm beginning to see the 'cool' features of Management Studio. One big improvement is to script all your modifications to an object directly to a Query window instead of the clipboard. Some are simple but very useful like the ability to resize/maximize every window. When it comes to big databases the tree is more intelligent so that it only loads the parts you select. Having to load all tables, stored procedures, ... from all databases while you are perhaps only interested in 1 table in 1 database doesn't make any sense right?

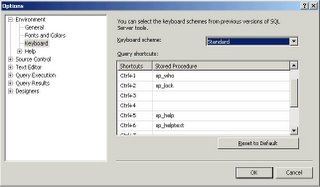

One of the things I immediately changed were the keyboard shortcuts. I'm quite addicted to sp_help and sp_helptext and being able to select an object and get all it's information with a simple shortcut has saved me many hours.

Wednesday, November 09, 2005

Scalable shared databases are supported by SQL Server 2005

Tuesday, November 08, 2005

Reporting Services 2005 for clientside reports

This however requires some specific features:

- multiple language

- take xml as input (using xpath in textboxes)

- import of ActiveReports files

- ...

Does anyone have any experience with this yet?

Thursday, November 03, 2005

SQL Server 2005 Adoption Rates

Monday, October 31, 2005

Uninstall SQL Server 2005 CTP

There is a handy tool to help you with this process called sqlbuw.exe which can be found in the CTP install directory under Setup Tools\Build Uninstall Wizard\sqlbuw.exe

Friday, October 28, 2005

Visual Studio 2005/SQL Server 2005

But just to make sure... it has arrived!!!

Congratulations to the team and let it come to me now :-D

Thursday, October 27, 2005

MCITP: Database Developer

A very welcome split.

The customer where I'm currently located uses this philosophy too. We have about 7 Development DBA's (including me) and 4 Operational DBA's.

Although most software developers are capable of writing queries it amazes me how little most of them *really* know about SQL Server. On the other hand a database developer's point of view is also very different from that of a database administrator.

I'd like to hear if there are any people out there opposing this split.

Anyway, check out the new certification here

Tuesday, October 25, 2005

Monday, October 24, 2005

Wednesday, October 19, 2005

Reporting Services Linked Report Generator

But remember... a fool with a tool is still a fool :-)

Tuesday, October 18, 2005

Does it matter if I build the clustered index before/after rebuilding the non-clustered indexes?

I was reading a QA on one of Kimberley's great sessions when I came across this interesting question and even more interesting answer. I had always been told that a rebuild of your clustered index causes a rebuild of your non clustered indices, apparently it needs to be more refined.

Does it matter if I build the clustered index before/after

rebuilding the non-clustered indexes?

You should always create the clustered index before creating non-clustered

index but as for rebuilding - you can rebuild your indexes independently as a

rebuild of the clustered does not (generally) cause a rebuild of the

non-clustered. There is one exception to this rule in SQL Server 2000 – in 2000

ONLY, SQL Server will automatically rebuild the non-clustered indexes when a

non-unique clustered index is rebuild. Why? Because the uniqueifiers are

rebuilt.